SelfPose: 3D Egocentric Pose Estimation From a Headset Mounted Camera

PubDate: October 2020

Teams: University College London; Technical University of Braunschweig; Facebook Reality Labs; Max Planck Institute for Intelligent Systems; Carnegie Mellon University

Writers: Denis Tome; Thiemo Alldieck; Patrick Peluse; Gerard Pons-Moll; Lourdes Agapito; Hernan Badino; Fernando de la Torre

PDF: SelfPose: 3D Egocentric Pose Estimation From a Headset Mounted Camera

Abstract

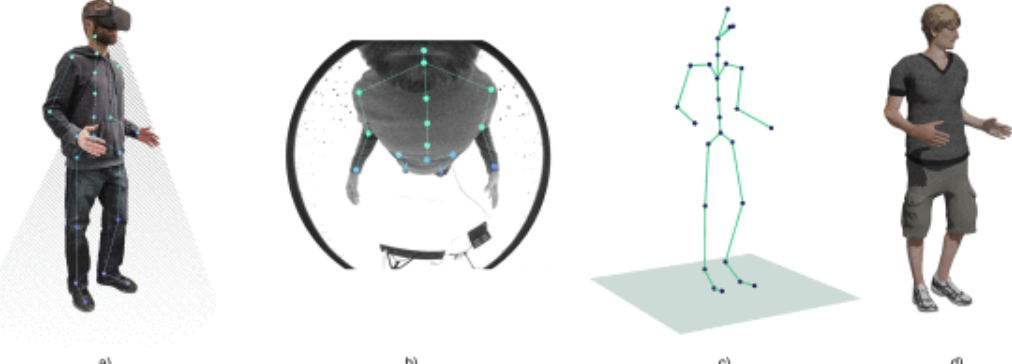

We present a new solution to egocentric 3D body pose estimation from monocular images captured by a downward-looking fish-eye camera installed on the rim of a head-mounted virtual reality device. This unusual viewpoint leads to images with a unique visual appearance, characterized by severe self-occlusions and strong perspective distortions that result in a drastic difference in resolution between the lower and upper body. We propose a new encoder-decoder architecture with a novel multi-branch decoder designed specifically to account for the varying uncertainty in 2D joint locations. Our quantitative evaluation, on both synthetic and real-world datasets, shows that our strategy leads to substantial improvements in accuracy over state-of-the-art egocentric pose estimation approaches. To tackle the severe lack of labelled training data for egocentric 3D pose estimation, we also introduce a large-scale photo-realistic synthetic dataset. xR-EgoPose offers 383K frames of high-quality renderings of people with diverse skin tones, body shapes and clothing, in a variety of backgrounds and lighting conditions, performing a range of actions. Our experiments show that the high variability in our new synthetic training corpus leads to good generalization to real-world footage and to state-of-the-art results on real-world datasets with ground truth. Moreover, an evaluation on the Human3.6M benchmark shows that the performance of our method is on par with top-performing approaches on the more classic problem of 3D human pose estimation from a third-person viewpoint.