Exploring the Use of a Robust Depth-sensor-based Avatar Control System and its Effects on Communication Behaviors

PubDate: November 2019

Teams: University of Canterbury, Beijing Institute of Technology

Writers: Yuanjie Wu, Yu Wang, Sungchul Jung, Simon Hoermann, Robert W. Lindeman

Abstract

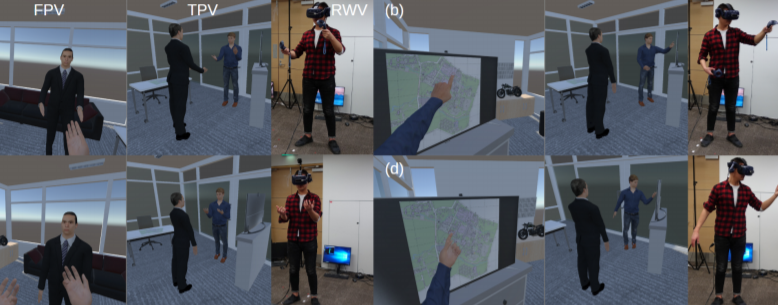

To interact as fully tracked avatars with rich hand gestures in Virtual Reality (VR), we often need to wear a tracking suit or attach extra sensors to our bodies. User experience and performance may be impacted by cumbersome devices and low-fidelity behavior representations, especially in social scenarios where good communication is required. In this paper, we use multiple depth sensors and focus on increasing the behavioral fidelity of a participant’s virtual body representation. To investigate the impact of the depth-sensor-based avatar system (full-body tracking with hand gestures), we compared it against a controller-based avatar system (partial-body tracking with limited hand gestures). We designed a single-user VR interview simulation to measure the effects on presence, virtual body ownership, workload, usability, and perceived self-performance. Specifically, the interview process was recorded in VR, together with all verbal and non-verbal cues. Subjects then reviewed the recording from a third-person view to evaluate their own performance. Our results show that the depth-sensor-based avatar control system increased virtual body ownership and improved the user experience. In addition, users rated their non-verbal behavior performance higher with the full-body depth-sensor-based avatar system.