Augmenting Virtual Reality with Near Real World Objects

PubDate: August 2019

Teams: University of Applied

Writers: Michael Rauter; Christoph Abseher; Markus Safar


Abstract

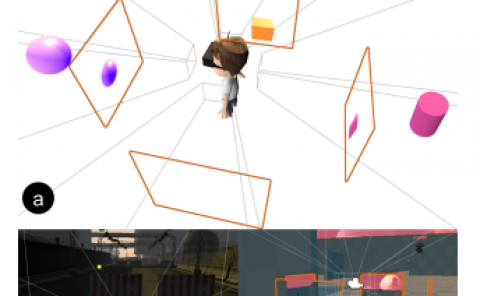

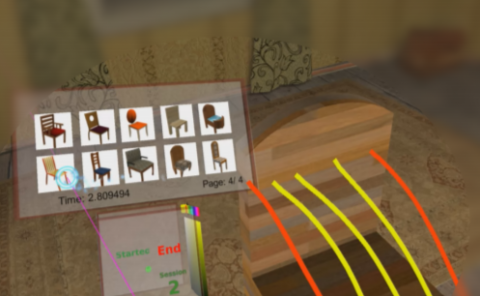

Pure virtual reality headsets lack support for user awareness of real-world objects. We show how to augment a virtual environment with real-world objects by incorporating color and stereo information from the front-mounted stereo camera system of the HTC Vive Pro. The mix of virtual and real elements is adjustable: by specifying the depth range of interest for real objects, these can either always be overlaid on top of the virtual reality or be embedded in the virtual world with correct occlusion. Possible use cases are training with appliances where users need to see both the appliance and their hands, collaborating in virtual reality with the real users instead of avatars, and detecting static and moving obstacles. Objects of interest are cut out based on the depth values in the stereo matching system's depth image. Because this depth image is aligned with only one of the two camera sensors, a second depth image is derived to match the other sensor. We overlay the rendered virtual reality images for both eyes with the corresponding cut-out camera images. Furthermore, we address the problem of missing depth data, especially for very near objects, with an integral-image-based, depth-dependent foreground mask expansion algorithm. Since a comfortable mixed reality experience requires high frame rates, ideally 90 frames per second, we use the GPU for optimized implementations of the algorithms and computations involved.
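The cut-out and overlay steps described in the abstract can be illustrated with a minimal CPU sketch in plain Python. This is not the authors' GPU implementation: all function names are hypothetical, a depth of 0 is assumed to mark missing data, and the mask expansion uses a fixed window radius instead of the depth-dependent one the paper describes. It only shows how an integral image (summed-area table) lets each pixel test in constant time whether any foreground pixel lies nearby.

```python
def depth_range_mask(depth, near, far):
    """Foreground mask: 1 where a valid depth lies in [near, far], else 0.
    Assumption: a depth value of 0 marks missing data."""
    return [[1 if d > 0 and near <= d <= far else 0 for d in row] for row in depth]


def integral_image(img):
    """Summed-area table with an extra leading row and column of zeros."""
    h, w = len(img), len(img[0])
    sat = [[0] * (w + 1) for _ in range(h + 1)]
    for y in range(h):
        row_sum = 0
        for x in range(w):
            row_sum += img[y][x]
            sat[y + 1][x + 1] = sat[y][x + 1] + row_sum
    return sat


def box_sum(sat, y0, x0, y1, x1):
    """Sum of img over rows y0..y1-1 and columns x0..x1-1, in O(1)."""
    return sat[y1][x1] - sat[y0][x1] - sat[y1][x0] + sat[y0][x0]


def expand_mask(mask, depth, radius):
    """Mark missing-depth pixels as foreground if any foreground pixel lies
    within a (2*radius+1)^2 window. Simplification: the radius is fixed here,
    not derived from depth."""
    h, w = len(mask), len(mask[0])
    sat = integral_image(mask)
    out = [row[:] for row in mask]
    for y in range(h):
        for x in range(w):
            if depth[y][x] > 0:
                continue  # pixel has valid depth; keep its original label
            y0, y1 = max(0, y - radius), min(h, y + radius + 1)
            x0, x1 = max(0, x - radius), min(w, x + radius + 1)
            if box_sum(sat, y0, x0, y1, x1) > 0:
                out[y][x] = 1
    return out


def composite(virtual, camera, mask):
    """Show the camera pixel where the mask is set, else the rendered VR pixel."""
    return [
        [c if m else v for v, c, m in zip(v_row, c_row, m_row)]
        for v_row, c_row, m_row in zip(virtual, camera, mask)
    ]
```

In a stereo setup, this would run once per eye, using the depth image that matches the respective camera sensor. A real-time version would move each per-pixel loop to the GPU.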